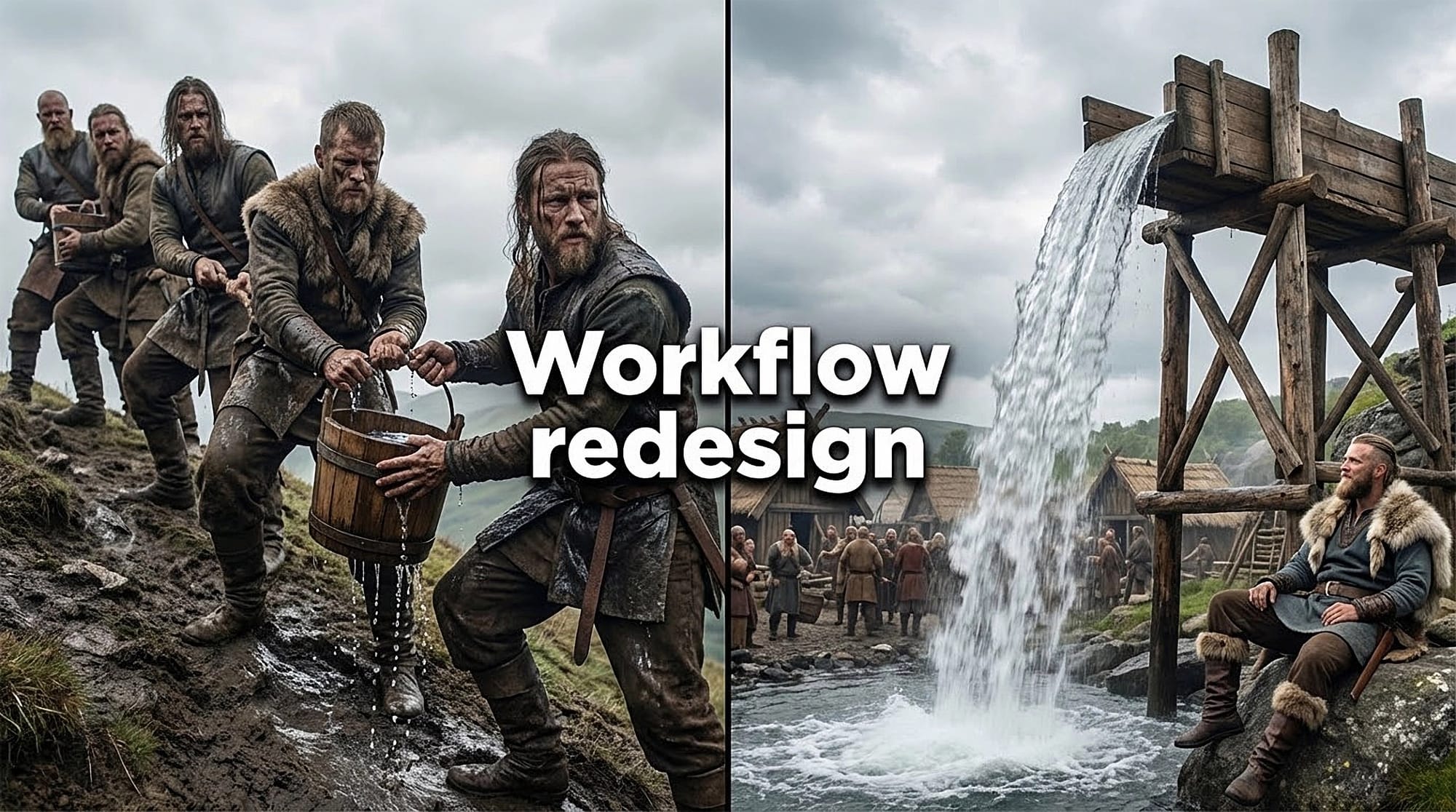

A 15-second webcam clip, three angles, and phoneme-level lip sync. That's what HeyGen used to build Avatar V, their latest avatar model, which separates identity from appearance. Record once and the avatar keeps the same micro-expressions and gestures across wide, medium, and close-up frames, so a pair of glasses, a smile, or a pause reads the same in a 30-second clip or a 30-minute lesson.

This matters if you make training, marketing, or localisation videos. Avatar V trains on a full video context window, reduces identity drift, and delivers accurate lip sync in 175+ languages without a studio or hours of footage. You get multi-angle output and long-form consistency from a single webcam recording.

Record 15 seconds; you'll get a digital twin that moves like you.
